Developing a Virtual Simulator for Laparoscopic Surgery

This article reports on a project to develop a virtual simulator that trains residents and evaluates their skills as they perform virtual laparoscopic procedures. Kitware leads this project in partnership with top research universities and a small business.

Minimally invasive surgery, such as laparoscopic surgery, has revolutionized surgical treatment. Performing laparoscopic surgery, however, comes with a steep learning curve and calls for extensive training. To teach and evaluate the cognitive and psychomotor skills that are unique to laparoscopic surgery, a joint committee of members of the Society of American Gastrointestinal and Endoscopic Surgeons (SAGES) and the American College of Surgeons (ACS) created the Fundamentals of Laparoscopic Surgery (FLS) program. The FLS program has a high-stakes cognitive and skills assessment component. For this component, trainees use a mechanical training toolbox to perform five tasks: transferring objects onto pegs, cutting precise patterns, completing a loop ligation, suturing with an extracorporeal knot, and suturing with an intracorporeal knot.

While the mechanical training toolbox can help evaluate skills in laparoscopic surgery, SAGES personnel must hand-score the test materials to determine how well residents perform the tasks. Hand scoring delays feedback to trainees, adds considerable cost to the certification program, and introduces subjectivity into the overall scores. SAGES personnel must also replace the materials after every use.

To overcome the drawbacks of the mechanical training toolbox, Rensselaer Polytechnic Institute (RPI) developed a simulator, which it named Virtual Basic Laparoscopic Skill Trainer (VBLaST) [1]. In 2015, Kitware received funding from the National Institute of Biomedical Imaging and Bioengineering (NIBIB) of the National Institutes of Health (NIH) to work in close collaboration with RPI, Buffalo General Medical Center at the University of Buffalo, and SimQuest to extend and improve VBLaST. Andinet Enquobahrie, Ph.D., MBA, assistant director of medical computing at Kitware, leads the project.

This article provides background information on VBLaST [2] and describes accomplishments from the first year of the project. It also outlines plans to further develop and test VBLaST. These efforts will improve the effectiveness of the simulator and allow SAGES personnel to better educate and evaluate trainees.

Working to Replace the Mechanical Training Toolbox

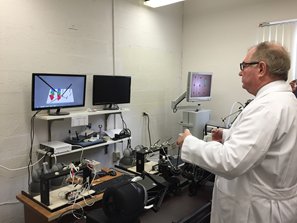

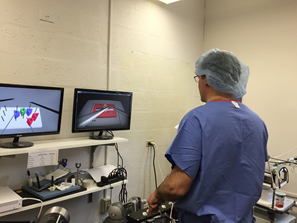

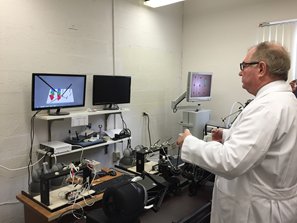

Professor Suvranu De guided the creation of VBLaST at RPI under a Research Project Grant from the NIH (R01 EB010037) [3]. As the images in this section depict, VBLaST uses advanced interactive, multimodal simulation technology to virtualize all five tasks of the mechanical training toolbox.

VBLaST replaces the test materials of the mechanical toolbox with virtually simulated objects. Trainees perform the FLS program tasks with actual laparoscopic instruments, which connect to haptic devices. The hardware interface of VBLaST tracks the motions of the instruments, and physics-based algorithms accurately simulate the interaction between the virtual instruments and the objects in the scene in real time. As this interaction occurs, VBLaST displays realistic visuals and provides appropriate haptic feedback. VBLaST also logs real-time performance data such as instrument motion, path length, and total time.
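
The article does not describe VBLaST's log format or how it computes these metrics. As an illustration only, the Python sketch below assumes a hypothetical log of timestamped tool-tip positions. The `Sample` record, the millimetre and second units, and both function names are assumptions, not VBLaST's API. The sketch derives path length and total time from that log.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Sample:
    """Hypothetical log record: time in seconds, tool-tip position in millimetres."""
    t: float
    pos: Tuple[float, float, float]


def path_length(samples: List[Sample]) -> float:
    """Sum of straight-line distances between consecutive tool-tip positions (mm)."""
    return sum(math.dist(a.pos, b.pos) for a, b in zip(samples, samples[1:]))


def total_time(samples: List[Sample]) -> float:
    """Elapsed time between the first and last samples (s); 0 if fewer than two."""
    if len(samples) < 2:
        return 0.0
    return samples[-1].t - samples[0].t


# Usage with a made-up three-sample log.
log = [Sample(0.0, (0.0, 0.0, 0.0)),
       Sample(0.5, (3.0, 4.0, 0.0)),
       Sample(1.0, (3.0, 4.0, 12.0))]
print(path_length(log), total_time(log))  # 17.0 1.0
```

Summing segment lengths between samples slightly underestimates the true path when the sampling rate is low. A simulator that logs at haptic rates would keep that error small.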

The VBLaST prototype facilitates the development of a training and certification platform, and it serves to validate the virtual FLS concept. For the NIH project, Kitware and collaborators are working to extend and improve this prototype so that they can deploy it in teaching hospitals and testing centers in clinical settings. The goals of the project are to incorporate two new tasks into VBLaST (camera navigation and cannulation), to build a robust haptic interface, and to improve the overall quality of the VBLaST software.

Employing a Simulator for Camera Navigation

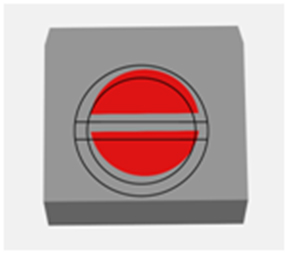

The team has already developed a camera-navigation simulator in VBLaST. The simulator trains residents to use laparoscopic cameras in the operating room with greater spatial awareness and positional accuracy. As the image above illustrates, the simulator arranges targets in a circle and overlays a marker pattern on the camera lens. Trainees must align the pattern with each target. Trainees can manipulate the position and orientation of the camera through movement, rotation, and articulation.

The development team chose the Visualization Toolkit (VTK) as the rendering engine for the simulator. The team also developed an automated scoring mechanism that bases scores on the accuracy of the marker's positional and angular alignment.
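
The article does not specify the scoring formula. The Python sketch below shows one plausible approach under stated assumptions. Positional error is taken as the distance between the marker centre and the target centre, and angular error as the angle between their normals. Each error is divided by a hypothetical tolerance (5 mm and 10 degrees here) and capped, and the two terms are weighted equally. The function names and tolerances are illustrative, not those of VBLaST.

```python
import math
from typing import Sequence


def angle_between_deg(u: Sequence[float], v: Sequence[float]) -> float:
    """Angle between two non-zero 3-D vectors, in degrees."""
    dot = sum(a * b for a, b in zip(u, v))
    cos_theta = dot / (math.hypot(*u) * math.hypot(*v))
    # Clamp to [-1, 1] to guard against floating-point round-off before acos.
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_theta))))


def alignment_score(marker_center, marker_normal, target_center, target_normal,
                    pos_tol_mm: float = 5.0, angle_tol_deg: float = 10.0) -> float:
    """Score in [0, 100]; 100 means the marker sits exactly on the target.

    Each error is divided by its (hypothetical) tolerance and capped at 1,
    then the positional and angular terms are weighted equally.
    """
    pos_err = math.dist(marker_center, target_center)
    ang_err = angle_between_deg(marker_normal, target_normal)
    pos_term = min(pos_err / pos_tol_mm, 1.0)
    ang_term = min(ang_err / angle_tol_deg, 1.0)
    return 100.0 * (1.0 - 0.5 * (pos_term + ang_term))


# Marker 2.5 mm off-centre but perfectly oriented: half the positional budget used.
print(alignment_score((2.5, 0, 0), (0, 0, 1), (0, 0, 0), (0, 0, 1)))  # 75.0
```

Capping each term keeps one very large error from driving the score negative. A real scoring scheme would need tolerances and weights set through validation with clinical collaborators.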

Designing a New Hardware Interface

The team also designed a new interface with haptic devices to improve the experimental hardware in VBLaST. SimQuest led the design of this hardware interface. To create the new interface, SimQuest added linkages and attachments to commercially available Novint Falcon haptic devices, which have update rates of up to 2,500 hertz. The additions helped to improve the workspace of VBLaST and facilitate the use of laparoscopic tool handles. The additions also helped to extend the force capability of VBLaST to a range of 3.4 newtons to 12 newtons.

The improved interface provides seven degrees of freedom: three translational, three rotational, and one for the open-close movement of the laparoscopic tools. The team has integrated this interface with the peg-transfer task and will test it at the University of Buffalo.
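
To make the seven degrees of freedom concrete, here is a hypothetical per-sample state record for one tool in Python. The field names, units (millimetres and radians), and the 0-to-1 range for the open-close value are assumptions for illustration, not the VBLaST hardware interface.

```python
from dataclasses import astuple, dataclass


@dataclass
class ToolState:
    """Hypothetical 7-DOF snapshot of one laparoscopic tool handle."""
    # Three translational DOF (millimetres).
    x: float
    y: float
    z: float
    # Three rotational DOF (radians).
    roll: float
    pitch: float
    yaw: float
    # One open-close DOF: 0.0 = fully closed, 1.0 = fully open (assumed range).
    jaw: float

    def __post_init__(self) -> None:
        # Reject open-close values outside the assumed normalized range.
        if not 0.0 <= self.jaw <= 1.0:
            raise ValueError(f"jaw opening must be in [0, 1], got {self.jaw}")

    def dof(self) -> int:
        """Number of degrees of freedom carried by this record."""
        return len(astuple(self))


state = ToolState(10.0, 0.0, -5.0, 0.0, 0.1, 0.0, 0.5)
print(state.dof())  # 7
```

In this sketch, the simulator would record one `ToolState` per instrument at each device update.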

Building a High-quality Software Infrastructure

Graduate students at RPI helped to develop the original VBLaST software. To prepare the software for commercial use at clinical facilities, the Kitware team improved the software process, streamlined the build process, adopted resource management tools, and incorporated best practices into VBLaST development. As part of this effort, the team introduced revision control and adopted a high-quality software development workflow. This workflow includes a stringent testing suite; a continuous integration system built on CMake, CTest, and CDash; a Web-based code review system; and a protocol for accepting patches into the main code repository. The team established this infrastructure to facilitate remote collaboration among team members at different institutions.

Enhancing VBLaST

The project is off to a great start, and the team has several tasks planned for the second year. The following offers a high-level summary of upcoming tasks.

Continuing to Develop Virtual Simulation Environments

The team will obtain feedback from clinical collaborators on the camera-navigation simulator. The team will then finalize the development of the simulator and prepare it for statistical validation. During the first half of the second year, the team will also develop capabilities for a cannulation task.

Creating an Automated Scoring System

The team will develop a novel automated system to score trainees based on the simulation data that VBLaST records. These data include metrics common to the FLS tasks, such as path length, jerk (the rate of change of acceleration, which reflects motion smoothness), and total time. For the knot-tying tasks, the team will develop algorithms to precisely measure the tightness and placement of the knots. The algorithms will use force measurements to determine the circumferences of the knots and compare those circumferences with predefined thresholds.
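
Jerk is the third time derivative of position, and lower accumulated jerk generally indicates smoother, more controlled instrument motion. The article does not say how VBLaST will compute it. The minimal sketch below assumes tool-tip positions sampled at a fixed interval `dt`. It estimates jerk with third-order forward finite differences and reports the integrated squared jerk. The function names are hypothetical.

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def jerk_samples(positions: Sequence[Vec3], dt: float) -> List[Vec3]:
    """Estimate jerk (third derivative of position) with forward finite differences.

    Assumes positions are sampled at a fixed interval dt (seconds).
    """
    jerks: List[Vec3] = []
    for i in range(len(positions) - 3):
        p0, p1, p2, p3 = positions[i:i + 4]
        # Per axis: x'''(t) ~ (x[i+3] - 3 x[i+2] + 3 x[i+1] - x[i]) / dt^3.
        jerks.append(tuple((d - 3 * c + 3 * b - a) / dt ** 3
                           for a, b, c, d in zip(p0, p1, p2, p3)))
    return jerks


def integrated_squared_jerk(positions: Sequence[Vec3], dt: float) -> float:
    """Sum of squared jerk magnitudes times dt; lower values mean smoother motion."""
    return sum(sum(c * c for c in j) for j in jerk_samples(positions, dt)) * dt


# x(t) = t^3 has constant jerk 6; sample it at t = 0, 1, 2, 3, 4.
cubic = [(float(t ** 3), 0.0, 0.0) for t in range(5)]
print(jerk_samples(cubic, 1.0))             # [(6.0, 0.0, 0.0), (6.0, 0.0, 0.0)]
print(integrated_squared_jerk(cubic, 1.0))  # 72.0
```

Finite differences amplify sensor noise, so a practical implementation would likely filter the positions before differentiating.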

The team will develop additional metrics for camera navigation that measure hand steadiness and spatial awareness. The VBLaST software will use the recorded metrics to arrive at the final scores, and it will not require any manual intervention.
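
The article does not define the hand-steadiness metric or how the metrics combine into a final score. In the possible Python sketch below, steadiness is the root-mean-square deviation of the camera tip from its mean position while the trainee holds the camera on a target. Each lower-is-better metric is then normalized against a hypothetical "worst acceptable" value, and the results are combined as a weighted average. The reference values, weights, and function names are placeholders, not validated parameters.

```python
import math
from typing import Dict, Sequence, Tuple


def rms_deviation(positions: Sequence[Tuple[float, float, float]]) -> float:
    """RMS distance of samples from their mean position; smaller means a steadier hand."""
    n = len(positions)
    mean = tuple(sum(p[k] for p in positions) / n for k in range(3))
    return math.sqrt(sum(math.dist(p, mean) ** 2 for p in positions) / n)


def composite_score(metrics: Dict[str, float],
                    worst: Dict[str, float],
                    weights: Dict[str, float]) -> float:
    """Weighted average of normalized lower-is-better metrics, scaled to [0, 100].

    A metric equal to 0 earns full credit; a metric at or beyond its
    'worst acceptable' reference value earns nothing.
    """
    total_weight = sum(weights.values())
    score = 0.0
    for name, weight in weights.items():
        normalized = 1.0 - min(metrics[name] / worst[name], 1.0)
        score += weight * normalized
    return 100.0 * score / total_weight


# Made-up values: halfway to the worst acceptable time and path length.
print(composite_score({"time_s": 60.0, "path_mm": 500.0},
                      {"time_s": 120.0, "path_mm": 1000.0},
                      {"time_s": 1.0, "path_mm": 1.0}))  # 50.0
```

Because every step is a fixed formula over recorded data, a score like this needs no manual intervention.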

Improving the Haptics Hardware Design

Currently, the haptics hardware can render forces along, and torques about, three Cartesian axes. The team will test this hardware to determine whether it meets the haptic-fidelity needs of VBLaST. The team will also ensure that the hardware is production-ready with regard to ease of use, robustness, manufacturability, and cost.

Dr. Schwaitzberg evaluates the simulator at Buffalo General Medical Center.

Conducting Extensive Validation Studies

The team has already started to collect feedback and to evaluate the face, content, and construct validity of VBLaST. To further evaluate VBLaST, members of the department of surgery at the University of Buffalo will conduct a study under the supervision of Dr. Steven Schwaitzberg, a professor in and the chairman of the department. The study will measure how effectively VBLaST trains and assesses surgical residents as they perform virtual laparoscopic surgery.

Summary

The project focuses on the development of a virtual FLS platform to accelerate laparoscopic surgical education and evaluation. The work offers just one example of how Kitware partners with top research universities and technology companies to build powerful platforms for surgical training and certification through the use of open-source solutions.

To learn more about medical computing expertise at Kitware, please go to http://www.kitware.com/solutions/medicalimaging/medicalimaging.html. To find out how Kitware can tailor its solutions to meet research and educational needs, please contact kitware@kitware.com.

Acknowledgement

The authors would like to acknowledge the following members of the project: Suvranu De, Woojin Ahn, Trudi Qi, and Karthik Panneerselvam from RPI; Ryan Beasley and Tim Kelliher from SimQuest; and Steven Schwaitzberg, Lora Cavuoto, and Nargis Hossian from the University of Buffalo.

Research reported in this publication was supported by the National Institute of Biomedical Imaging and Bioengineering of the National Institutes of Health under Award Number R44EB019802. The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institutes of Health.

References

[1] Arikatla, Venkata Sreekanth, Ganesh Sankaranarayanan, Woojin Ahn, Amine Chellali, Caroline G. L. Cao, and Suvranu De. “Development and Validation of VBLaST-PT©: A Virtual Peg Transfer Simulator.” Studies in Health Technology and Informatics 184 (2013): 24-30.

[2] Lee, Jason, Woojin Ahn, and Suvranu De. “Development of Extracorporeal Suturing Simulation in Virtual Basic Laparoscopic Skill Trainer (VBLaST).” Studies in Health Technology and Informatics 196 (2014): 245-247.

[3] Chellali, Amine, Woojin Ahn, Ganesh Sankaranarayanan, Jeff Flinn, Steven D. Schwaitzberg, Daniel B. Jones, Suvranu De, and Caroline G. L. Cao. “Preliminary Evaluation of the Pattern Cutting and the Ligating Loop Virtual Laparoscopic Trainers.” Surgical Endoscopy 29 (2015): 815-821.

Andinet Enquobahrie is an assistant director of medical computing at Kitware. As a subject matter expert, he is responsible for the technical contribution and management of projects that involve image-guided intervention and surgical simulation.

Sreekanth Arikatla is a senior research and development engineer at Kitware. His interests include numerical methods, computational mechanics, computer graphics, and virtual surgery.