Introducing Interactive Medical Simulation Toolkit: Part II

Following our introduction to the interactive Medical Simulation Toolkit (iMSTK), which covered how to download iMSTK, build the software, and run the “Hello World” example, we discuss four medical simulation applications that use iMSTK.

Camera Navigation

The camera navigation simulator trains users to master the use of laparoscopic cameras in operating rooms. It is designed to help them improve their skills in endoscopic operations, spatial awareness, and camera positioning. As the video shows, the simulator arranges targets in a circle and overlays an alignment pattern on the camera lens. Users must match the pattern with each target. They can manipulate the position and orientation of the camera through tilt movements, camera head rotation, and scope angulation.

We built the camera navigation simulator entirely on iMSTK, with the Visualization Toolkit (VTK) as the rendering backend. iMSTK’s Hardware module tracks the tip of the endoscope through a haptic device interface. The CameraController class uses the tracking data to drive VTK’s camera object. Further, the simulator leverages VTK’s utilities for 2D and 3D projections to compute performance metrics. At the end of the task, users are presented with visualizations of the camera path and values from various metric calculations. For additional reading, please see our related work, which was published in Surgical Endoscopy [1].
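To make the metric step more concrete, here is a minimal sketch of two quantities a camera navigation task could report: the length of the camera path, and how far a target lands from the image center after a 3D-to-2D projection. It is plain Python, not iMSTK or VTK code. The function names, the pinhole camera model, and the choice of metrics are illustrative assumptions; the metrics the simulator actually computes are described in [1].

```python
import math


def path_length(points):
    """Total length of the polyline through a list of 3D points."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))


def project_to_image(point_cam, focal_length=1.0):
    """Pinhole projection of a point given in camera coordinates.

    Assumes the camera looks down +z, so z must be positive.
    """
    x, y, z = point_cam
    if z <= 0:
        raise ValueError("point is behind the camera")
    return (focal_length * x / z, focal_length * y / z)


def alignment_error(target_cam, focal_length=1.0):
    """Distance of the projected target from the image center (0, 0)."""
    u, v = project_to_image(target_cam, focal_length)
    return math.hypot(u, v)


# Hypothetical tracked camera positions sampled over time.
camera_path = [(0.0, 0.0, 0.0), (3.0, 4.0, 0.0), (3.0, 4.0, 12.0)]
print(path_length(camera_path))            # 17.0
print(alignment_error((3.0, 4.0, 1.0)))    # 5.0
```

A shorter path and a smaller alignment error would both suggest steadier, more economical camera handling, which is the kind of signal an end-of-task summary can visualize.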

Osteotomy Trainer

The osteotomy trainer simulates bone cutting, a step common to many procedures such as bilateral sagittal split osteotomy (BSSO) and temporal bone surgery. More specifically, the trainer simulates cutting bone with an oscillating saw blade running at high speed. The fully immersive VR experience is made possible through the VTK rendering backend in iMSTK.

iMSTK’s Hardware module supports the Geomagic Touch device, through which the blade saw is tracked and the forces are rendered. The topological changes that result from the cut are implemented using VTK’s visibility functionality. Please read “High Fidelity Virtual Reality Orthognathic Surgery Simulator” [2] for more details.
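The idea of cutting by toggling visibility, rather than deleting and rebuilding geometry, can be sketched in a few lines. The example below is plain Python and does not use VTK. The `VoxelBone` class, the voxel grid representation, and the box-shaped blade region are all assumptions made for illustration; the trainer's actual implementation is described in [2].

```python
from dataclasses import dataclass, field


@dataclass
class VoxelBone:
    """Hypothetical bone model: a regular grid of cells with visibility flags."""
    dims: tuple  # (nx, ny, nz) number of cells along each axis
    visible: list = field(init=False)

    def __post_init__(self):
        nx, ny, nz = self.dims
        self.visible = [True] * (nx * ny * nz)

    def index(self, i, j, k):
        """Flat index of cell (i, j, k)."""
        nx, ny, _ = self.dims
        return i + nx * (j + ny * k)

    def cut(self, box_min, box_max):
        """Hide every cell inside the inclusive index box swept by the blade.

        Returns the number of cells newly hidden by this call.
        """
        lo = [max(0, m) for m in box_min]
        hi = [min(d - 1, m) for d, m in zip(self.dims, box_max)]
        hidden = 0
        for k in range(lo[2], hi[2] + 1):
            for j in range(lo[1], hi[1] + 1):
                for i in range(lo[0], hi[0] + 1):
                    idx = self.index(i, j, k)
                    if self.visible[idx]:
                        self.visible[idx] = False
                        hidden += 1
        return hidden

    def visible_count(self):
        return sum(self.visible)


bone = VoxelBone((4, 4, 4))
print(bone.cut((1, 0, 0), (1, 3, 3)))  # 16 cells hidden: one full slab
print(bone.visible_count())            # 48 cells still rendered
```

Because the cells are only flagged and never removed, the underlying data structure keeps its size and indexing during the cut, which keeps each update cheap enough for an interactive loop.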

Kidney Biopsy Virtual Trainer

We developed the Kidney Biopsy Virtual Trainer (KBVTrainer) to help clinicians improve their procedural competence in real-time ultrasound-guided renal biopsy. In the process, we interfaced iMSTK and 3D Slicer for the first time.

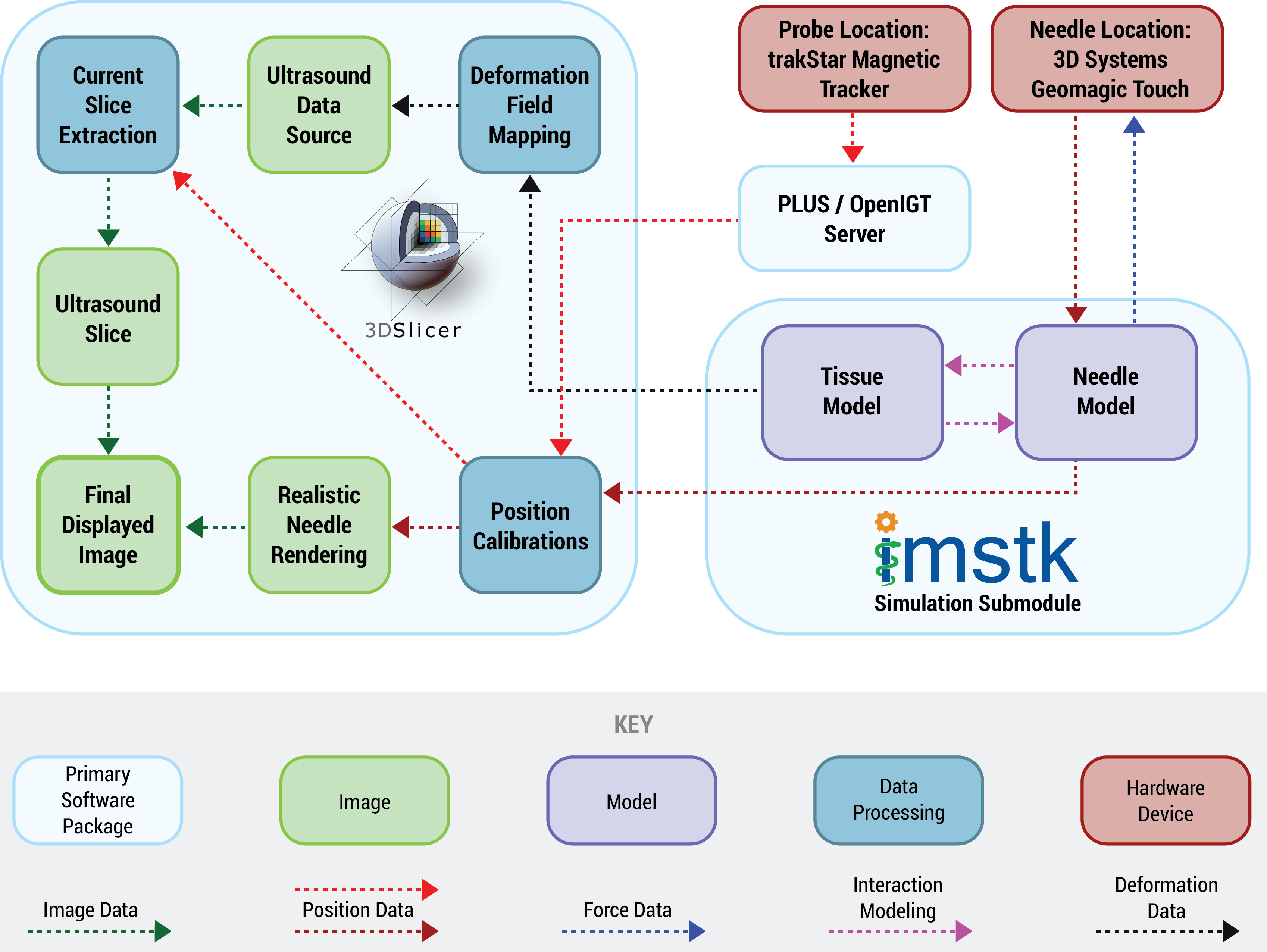

As the flowchart above shows, iMSTK’s Hardware module tracks the haptic device in the trainer workspace. 3D Slicer handles the magnetic tracking through its integration with the Plus library. iMSTK computes the kidney tissue deformation and its interaction with the needle, passing the information to 3D Slicer at every rendering frame. The transmitted data includes volumetric nodal deformation and forces on the needle. 3D Slicer displays the deformed kidney overlay using its rendering window. To learn about the research methodology, please read “Towards an advanced virtual ultrasound-guided renal biopsy trainer” [3].
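As a rough illustration of the per-frame data described here, the sketch below bundles nodal displacements and the needle force into one message and round-trips it through a byte buffer. This is not the KBVTrainer protocol: the `FrameUpdate` class, the binary layout, and the use of Python's `struct` module are assumptions, and the actual transport between iMSTK and 3D Slicer is not covered in this post.

```python
import struct
from dataclasses import dataclass


@dataclass
class FrameUpdate:
    """Hypothetical per-frame message from the simulation to the renderer."""
    displacements: list  # one (dx, dy, dz) tuple per mesh node
    needle_force: tuple  # (fx, fy, fz) acting on the needle

    def pack(self):
        """Serialize as: node count (uint32), then little-endian doubles."""
        n = len(self.displacements)
        flat = [c for d in self.displacements for c in d]
        return struct.pack(f"<I{3 * n}d3d", n, *flat, *self.needle_force)

    @classmethod
    def unpack(cls, data):
        """Rebuild a FrameUpdate from bytes produced by pack()."""
        (n,) = struct.unpack_from("<I", data, 0)
        values = struct.unpack_from(f"<{3 * n + 3}d", data, 4)
        disp = [tuple(values[3 * i:3 * i + 3]) for i in range(n)]
        return cls(disp, tuple(values[3 * n:]))


update = FrameUpdate(
    displacements=[(0.0, 0.5, 0.0), (0.25, 0.0, -0.125)],
    needle_force=(0.0, 0.0, 1.5),
)
payload = update.pack()
print(len(payload))                           # 76 bytes: 4 + 8 * 9
print(FrameUpdate.unpack(payload) == update)  # True
```

Sending the node count first lets the receiver size its buffers before reading the displacements, which matters when the same message format has to serve meshes of different resolution.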

Virtual Airway Skill Trainer

Virtual Airway Skill Trainer (VAST), developed by our collaborators at CeMSIM (RPI), provides a virtual and risk-free environment to train clinicians to perform airway management procedures such as cricothyrotomy and endotracheal intubation. VAST offers a VR experience and can also provide haptic feedback.

To achieve realistic rendering for the tools and the skin surface, iMSTK uses physically based rendering within the Vulkan rendering backend. Please visit VAST’s project page to learn more about the trainer.

Future work

We will continue to support the developers and end users of iMSTK. In the coming years, for example, we will work to broaden the scope of the applications that are possible with iMSTK. To do so, we will interface iMSTK more comprehensively with 3D Slicer, as well as with the Pulse physiology engine and Computational Model Builder. We lead the development and maintenance of all three of these open source packages. In addition, we plan to add critical features to iMSTK such as a parallel architecture and advanced collision handling algorithms.

Acknowledgment

Research reported in this publication was supported, in part, by the National Institute of Biomedical Imaging And Bioengineering of the National Institutes of Health (NIH) under Award Number R44EB019802; by the National Institute of Diabetes and Digestive and Kidney Diseases of the National Institutes of Health under Award Number R43DK115332; by the Office Of The Director, National Institutes Of Health of the National Institutes of Health under Award Number R44OD018334; by the National Institute of Dental & Craniofacial Research of the National Institutes of Health under Award Number R43DE027595; and by the National Heart, Lung, and Blood Institute under Award Number R01HL119248.

The content is solely the responsibility of the authors and does not necessarily represent the official views of the NIH and its institutes.

References

[1] Arikatla, Venkata S., Sam Horvath, Yaoyu Fu, Lora Cavuoto, Suvranu De, Steve Schwaitzberg, and Andinet Enquobahrie. “Development and face validation of a virtual camera navigation task trainer.” Surgical Endoscopy, (2018): 1-11. doi: 10.1007/s00464-018-6476-6. Accessed December 21, 2018.

[2] Arikatla, Venkata S., Mohit Tyagi, Andinet Enquobahrie, Tung Nguyen, George H. Blakey, Ray White, and Beatriz Paniagua. “High Fidelity Virtual Reality Orthognathic Surgery Simulator.” Proceedings Volume 10576, Medical Imaging 2018: Image-Guided Procedures, Robotic Interventions, and Modeling. 2018. doi: 10.1117/12.2293690.

[3] Horvath, Samantha, Venkata S. Arikatla, Kevin Cleary, Karun Sharma, Avi Rosenberg, and Andinet Enquobahrie. “Towards an advanced virtual ultrasound-guided renal biopsy trainer.” Paper to be presented at SPIE Medical Imaging 2019, San Diego, CA, February 2019.

Hello everybody,

That’s an awesome toolkit for developing applications of virtual simulation aimed at medical training.

Is there a ready-to-run example of this toolkit somewhere, either similar to those shown in this post or even simpler?

Thanks a lot in advance!

Thank you for your feedback. Unfortunately, all the examples shown require external hardware (seen in the video) to run. You can, however, download the code and build it using the instructions at https://gitlab.kitware.com/iMSTK/iMSTK/

Once built, you should be able to run and test the examples that ship with the code. Please let us know if you have questions regarding this.

You can post your questions or start a discussion on our Discourse page: https://discourse.kitware.com/c/imstk

This technology, coupled with robotics and machine learning, would go a long way. In the future, robots could perform emergency life-saving procedures on casualties where medical staff are overburdened or in short supply, among other possible applications.