Ultrasound Research: Notes from MICCAI 2017

October 6, 2017

Research and applications that pair ultrasound imaging with deep learning, image processing, and/or statistical modeling were featured in numerous presentations at the Medical Image Computing and Computer-Assisted Intervention (MICCAI) 2017 conference. The three-volume conference proceedings are available from the conference website and from Springer. In this blog post, we present a brief summary of our notes from reviewing the ultrasound papers in those proceedings. We hope you find these papers to be as inspirational for your work as they have been for ours:

- MICCAI 2017, Vol I

- A 3D Femoral Head Coverage Metric for Enhanced Reliability in Diagnosing Hip Dysplasia (p. 100)

- Segment the femoral head in 3D ultrasound using a voxel-wise probability map.

- Applied to infant hips in which the femoral head is unossified.

- Integrating Statistical Prior Knowledge into Convolutional Neural Networks (p. 161)

- Uses a coarse network to identify key points using a PCA shape representation, and a fine-grain network to segment around those key points.

- Applied to ultrasound of heart left ventricle for segmentation and to ultrasound of heart for landmark identification.

- Learning and Incorporating Shape Models for Semantic Segmentation (p. 203)

- Includes shape priors within the U-Net segmentation framework by adapting the loss function. An auto-encoder learns to reproduce shapes, and the U-Net output is projected onto the auto-encoder space to constrain it to a learned shape representation.

- Applied to ultrasound kidney segmentation

- Towards Automatic Semantic Segmentation in Volumetric Ultrasound (p. 711)

- Uses a U-Net to create voxel-wise probability maps; a time sequence of those maps is then analyzed by a recurrent (bi-directional long short-term memory) network to produce a labeled image. Training uses deep supervision (auxiliary classifiers on intermediate convolutional layers).

- Applied to 3D fetal ultrasound to identify fetus, sac, and placenta

- MICCAI 2017, Vol II

- Flow Network Based Cardiac Motion Tracking Leveraging Learned Feature Matching (p. 279)

- A flow network is a graph whose nodes on the cardiac surface at one time point are linked to potentially corresponding surface positions at the next time point; the goal is to determine point-cloud correspondence across the time series. Edge weights are learned by a Siamese network trained on simulated image patches.

- Applied to left ventricle motion analysis

- Detection and Characterization of the Fetal Heartbeat in Free-hand Ultrasound Sweeps with Weakly-supervised Two-streams Convolution Networks (p. 305)

- Custom two-stream architecture: one stream uses three consecutive images to estimate optical flow, and the second stream combines image features with the optical flow estimates to create a multi-class label map (fetal skull, abdomen, heart, and background).

- Applied to detecting fetal heart in ultrasound

- Learning-Based Spatiotemporal Regularization and Integration of Tracking Methods for Regional 4D Cardiac Deformation Analysis (p. 323)

- Inputs 4D Lagrangian displacement patches to a neural network that learns regularization, with consideration for returning the identity if a patch is already smooth.

- Applied to myocardium from ultrasound

- Temporal HeartNet: Towards Human-Level Automatic Analysis of Fetal Cardiac Screening Video (p. 341)

- Estimates heart visibility, viewing plane, location, and orientation using CNNs linked by recurrent connections across corresponding convolutional layer locations. Features are aggregated in a window using circular anchors of four different sizes, with the highest classification score used to select the anchor of interest. Optimizes an Intersection over Union loss for learning localization, and a cosine loss for learning orientation.

- Applied to US video of fetal hearts
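The two loss terms noted for Temporal HeartNet can be illustrated with a minimal sketch. It assumes the heart is localized as a circle (center and radius) and that orientation is a single angle in radians. The function names and the specific `1 - IoU` and `1 - cos` forms are illustrative assumptions, not details taken from the paper.

```python
import math

def circle_iou(c1, r1, c2, r2):
    """Intersection over union of two circles given centers (x, y) and radii."""
    d = math.hypot(c1[0] - c2[0], c1[1] - c2[1])
    a1, a2 = math.pi * r1 ** 2, math.pi * r2 ** 2
    if d >= r1 + r2:                 # disjoint circles
        inter = 0.0
    elif d <= abs(r1 - r2):          # one circle lies inside the other
        inter = min(a1, a2)
    else:                            # partial overlap: sum of two circular segments
        alpha = math.acos((d * d + r1 * r1 - r2 * r2) / (2 * d * r1))
        beta = math.acos((d * d + r2 * r2 - r1 * r1) / (2 * d * r2))
        inter = (r1 * r1 * (alpha - math.sin(2 * alpha) / 2)
                 + r2 * r2 * (beta - math.sin(2 * beta) / 2))
    return inter / (a1 + a2 - inter)

def iou_loss(c_pred, r_pred, c_true, r_true):
    """Assumed localization loss: zero for a perfect match, one for no overlap."""
    return 1.0 - circle_iou(c_pred, r_pred, c_true, r_true)

def cosine_loss(angle_pred, angle_true):
    """Assumed orientation loss: insensitive to 2*pi wrap-around."""
    return 1.0 - math.cos(angle_pred - angle_true)
```

A cosine-based orientation loss is convenient because predictions of 0 and 2π radians are treated as identical, which a plain squared error on the angle would not do.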

- Analysis of Periodicity in Video Sequences Through Dynamic Linear Modeling (p. 386)

- Models a quasi-periodic signal using nested dynamic linear models plus a random walk and noise. Likelihood ratio estimates are used to identify regions of periodicity.

- Applied to dural pulsation in lumbar ultrasound
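The likelihood-ratio idea in the periodicity paper can be sketched with two small Kalman filters. This is a simplified illustration, not the paper's model: it compares a pure harmonic state-space model against a random-walk-plus-noise model, whereas the paper nests the periodic component with the random walk. The function names, the known angular frequency `omega`, and the variances `q`, `r`, and `p0` are all assumptions chosen for the example.

```python
import math

def loglik_random_walk(y, q, r, p0=10.0):
    """Log-likelihood of y under a random-walk level observed with noise."""
    m, p = 0.0, p0                    # level estimate and its variance
    ll = 0.0
    for obs in y:
        p += q                        # predict: the level drifts as a random walk
        var = p + r                   # innovation variance
        e = obs - m                   # innovation
        ll += -0.5 * (math.log(2 * math.pi * var) + e * e / var)
        gain = p / var
        m += gain * e
        p *= 1.0 - gain
    return ll

def loglik_harmonic(y, omega, q, r, p0=10.0):
    """Log-likelihood of y under a rotating two-state (harmonic) model."""
    c, s = math.cos(omega), math.sin(omega)
    a, b = 0.0, 0.0                   # harmonic state; only `a` is observed
    p11, p12, p22 = p0, 0.0, p0       # symmetric 2x2 state covariance
    ll = 0.0
    for obs in y:
        # Predict: rotate the state and covariance by omega, add process noise.
        a, b = c * a + s * b, -s * a + c * b
        n11 = c * c * p11 + 2 * c * s * p12 + s * s * p22 + q
        n12 = c * s * (p22 - p11) + (c * c - s * s) * p12
        n22 = s * s * p11 - 2 * c * s * p12 + c * c * p22 + q
        # Update with the scalar observation y = a + noise.
        var = n11 + r
        e = obs - a
        ll += -0.5 * (math.log(2 * math.pi * var) + e * e / var)
        a += n11 / var * e
        b += n12 / var * e
        p11 = n11 - n11 * n11 / var
        p12 = n12 - n11 * n12 / var
        p22 = n22 - n12 * n12 / var
    return ll

def log_likelihood_ratio(y, omega, q_harmonic=1e-4, q_walk=1e-2, r=1e-2):
    """Positive values favor the periodic model for this window of samples."""
    return loglik_harmonic(y, omega, q_harmonic, r) - loglik_random_walk(y, q_walk, r)
```

Applied per pixel or per region over a sliding window of frames, a ratio like this would be large where the intensity pulsates at the assumed frequency and small or negative elsewhere.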

- Real-Time 3D Ultrasound Reconstruction and Visualization in the Context of Laparoscopy (p. 602)

- Perception study on the presentation of deep-seated, hidden surgical targets localized via ultrasound. Includes method for fast 3D reconstruction of a rectilinear volume from tracked, freehand US. Visualization is 3DUS with opacity as a function of distance from center.

- Applied to data from a PVA-C phantom

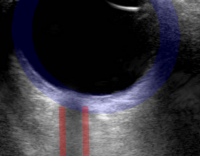
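The distance-based opacity used for the visualization can be sketched as a simple transfer function. The linear falloff, the choice of fully opaque at the center, and the names `opacity`, `center`, and `radius` are assumptions for illustration; the paper may use a different profile.

```python
import math

def opacity(voxel, center, radius):
    """Opacity in [0, 1]: 1 at the center, falling linearly to 0 at `radius`."""
    distance = math.dist(voxel, center)
    return max(0.0, 1.0 - distance / radius)
```

With this mapping, voxels near the region of interest stay visible while surrounding tissue fades out, which keeps a deep-seated target from being hidden by the rest of the volume.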

- Improving Needle Detection in 3D Ultrasound Using Orthogonal-Plane Convolutional Networks (p. 610)

- Centers orthogonal planes on each voxel for labeling by a CNN.

- Applied to short needles (early insertion to verify path) using chicken breast
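Extracting the three orthogonal planes around a voxel might look like the sketch below. It assumes the volume is a nested list indexed as `volume[z][y][x]` and that square patches of side `2 * half + 1` are wanted; the function name and patch size are hypothetical, and a real pipeline would more likely use array slicing.

```python
def ortho_patches(volume, z, y, x, half):
    """Return the xy, xz, and yz patches centered on voxel (z, y, x)."""
    depth, height, width = len(volume), len(volume[0]), len(volume[0][0])
    if not (half <= z < depth - half and half <= y < height - half
            and half <= x < width - half):
        raise ValueError("voxel is too close to the volume boundary")
    span = range(-half, half + 1)
    plane_xy = [[volume[z][y + j][x + i] for i in span] for j in span]  # fixed z
    plane_xz = [[volume[z + k][y][x + i] for i in span] for k in span]  # fixed y
    plane_yz = [[volume[z + k][y + j][x] for j in span] for k in span]  # fixed x
    return plane_xy, plane_xz, plane_yz
```

The three patches can then be stacked as channels or fed to separate network branches, giving the classifier 3D context at a fraction of the cost of a full 3D patch.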

- Motion-Compensated Autonomous Scanning for Tumour Localisation Using Intraoperative Ultrasound (p. 619)

- Robot-controlled ultrasound scanning in the presence of tissue motion, e.g., respiration. Motion is learned via a vision system and modeled via PCA of stereo-tracked points.

- Applied to simulated and ex vivo laparoscopic data (liver)

- Deep Learning for Sensorless 3D Freehand Ultrasound Imaging (p. 628)

- Inputs consecutive images, along with estimated in-plane displacement fields for those images, into a CNN.

- Applied to long ultrasound sweeps for vein mapping (7% drift, degrades on different anatomy)

- Ultrasonic Needle Tracking with a Fibre-Optic Ultrasound Transmitter for Guidance of Minimally Invasive Fetal Surgery (p. 637)

- Pulsed light from needle tip generates a photoacoustic ultrasonic wave that can be tracked.

- Applied to needle insertion in animal models.

- Precise Ultrasound Bone Registration with Learning-Based Segmentation and Speed of Sound Calibration (p. 682)

- FCNN (U-Net) for real-time bone surface estimation from multiple steered ultrasound frames. Estimates the speed of sound and corrects for it.

- Applied to human femur segmentation and registration with CT
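Why a speed-of-sound correction matters for bone registration can be seen from the basic pulse-echo relationship. Scanners conventionally convert echo time to depth using 1540 m/s, so a different true speed scales every displayed depth. The sketch below assumes a single uniform speed along the beam and ignores refraction; the function names are hypothetical, and it does not reproduce how the paper estimates the speed from steered frames.

```python
ASSUMED_SPEED_M_PER_S = 1540.0  # conventional soft-tissue value used for image formation

def depth_from_echo_time(round_trip_s, speed_m_per_s=ASSUMED_SPEED_M_PER_S):
    """Reflector depth in meters from the round-trip echo time in seconds."""
    return speed_m_per_s * round_trip_s / 2.0

def correct_depth(displayed_depth, true_speed_m_per_s,
                  assumed_speed_m_per_s=ASSUMED_SPEED_M_PER_S):
    """Rescale a displayed depth (any length unit) to the estimated true speed."""
    return displayed_depth * true_speed_m_per_s / assumed_speed_m_per_s
```

For example, a bone surface displayed at 50 mm would sit roughly 3 mm shallower if the true average speed were 1450 m/s, an error large enough to matter when registering to CT.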

- MICCAI 2017, Vol III

- Boundary Regularized Convolutional Neural Network for Layer Parsing of Breast Anatomy in Automated Whole Breast Ultrasound (p. 259)

- Identification of subcutaneous fat, breast parenchyma, pectoral muscle, and chest wall layers using a deep convolutional encoder-decoder network with deep boundary-supervised training.

- Applied to automated ultrasound breast scans

- Quality Assessment of Echocardiographic Cine Using Recurrent Neural Networks: Feasibility on Five Standard View Planes (p. 302)

- Image quality assessment using CNNs (VGG network). Assessed five planes simultaneously.

- Applied to 3D cardiac ultrasound

- Supervised Action Classifier: Approaching Landmark Detection as Image Partitioning (p. 338)

- Learn (using FCNN/SegNet) to partition image into regions left, right, above, and below the learned landmark – ambiguities resolved via smooth distance map

- Applied to cardiac and obstetric ultrasound in 2D.
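The training target for landmark detection as image partitioning can be sketched as a label map built from a known landmark position. The rule used here (assign each pixel by the dominant axis of its offset from the landmark, with ties going to the horizontal labels) is an assumption for illustration, as are the label values and the function name; the paper's exact partition and its distance-map step are not reproduced.

```python
LEFT, RIGHT, ABOVE, BELOW = 0, 1, 2, 3

def partition_labels(height, width, landmark):
    """Label each pixel by where it lies relative to `landmark` = (row, col)."""
    landmark_row, landmark_col = landmark
    labels = []
    for row in range(height):
        label_row = []
        for col in range(width):
            d_row, d_col = row - landmark_row, col - landmark_col
            if abs(d_col) >= abs(d_row):      # horizontal offset dominates (or ties)
                label_row.append(LEFT if d_col < 0 else RIGHT)
            else:                             # vertical offset dominates; row 0 is the top
                label_row.append(ABOVE if d_row < 0 else BELOW)
        labels.append(label_row)
    return labels
```

A segmentation network trained on such maps predicts the four regions, and the landmark is recovered as the point where the regions meet.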

- Clinical Target-Volume Delineation in Prostate Brachytherapy Using Residual Neural Networks (p. 365)

- Uses a ResNet with upsampling and training map weighted by distances from prostate boundary.

- Applied to transrectal ultrasound of the prostate

- Suggestive Annotation: A Deep Active Learning Framework for Biomedical Image Segmentation (p. 399)

- Determines which instances to annotate to best improve performance, using uncertainty estimates and similarity computed at intermediate layers.

- Applied to lymph node segmentation from ultrasound
